Image: Adobe Stock

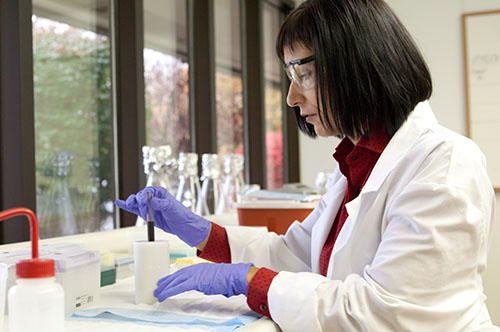

In March, the Penn State Center for Socially Responsible Artificial Intelligence awarded $93,000 to five research projects that consider the ethical and social implications of artificial intelligence. “We recognize that AI has the potential for great power,” says Dr. Prasenjit Mitra, director of the center. “Safeguards are needed. It’s important to get it right.”

Before joining Penn State as a professor in the College of Information Sciences and Technology, Dr. Mitra spent five years in Silicon Valley. He says his experiences shaped his view of socially conscious AI.

“When I was younger, I remember thinking that maybe good sense would prevail as technology moved forward so quickly. Maybe the government would make laws that considered ethics and social responsibility. But over the years I’ve realized that no matter how proactive the government is, technology will always be faster, and very few people in government have a full understanding of all the ethical implications of new technology. We as academics need to be aware of any potential problems that exist, and be influencing policy makers and working toward solutions.”

In its first year, the Center for Socially Responsible Artificial Intelligence advances research on robust, socially responsible AI at Penn State and beyond, involving experts from a multitude of disciplines such as science, technology, humanities, business, policy and law.

The first five recipients of seed funding will be researching:

“We have more than 70 researchers at Penn State who are studying AI across many disciplines,” Mitra says. “Automation, smart cities, medicine, education, business… And instead of just looking at the tech, we need to be looking at this holistically.”

He points to the 2019 lawsuit wherein the Department of Housing and Urban Development alleged that Facebook’s targeted advertising platform violated the Fair Housing Act, because it was “encouraging, enabling and causing” unlawful discrimination by restricting who can view housing ads.

Or open Google, type in “CEO” and go to Image Search. Even though 29% of leaders in senior management are women, only a fraction of the images on the first page of results show women.

“We need to do better,” Mitra says, because there is so much potential for good in the use of AI. “We are able to do drug simulations and development in months, when it used to take decades. Surgeons can use robots to perform surgeries hundreds of miles away, providing world-class care in places where specialized medical care is hard to obtain.”

On May 13, the center will host a virtual symposium, “AI in a Post-COVID World.” Attendance is free. You can find out how to apply AI to your industry, meet others in related fields and learn what you can do together with the center. More information will be available shortly on the center’s event page.

Interested in exploring social responsibility and AI? Mitra says the center is actively looking for more collaborators in all fields, especially medicine and automation. “It’s for anyone who wants to talk, wants to bring an intern onto their team. These relationships between businesses and our team tend to be symbiotic. That’s what the center is here to do.”
